Human-Centric Agile Disciplines for AI Code Generation

XP 2026 · Industry & Practice · São Paulo

Ken Judy · Senior Partner, Stride Consulting

kenjudy.us · stride.build

Agenda

01 The Problem

02 Why Review Fails

03 The Framework

04 Live Demo

05 Measurement

06 Discussion

Today's Session

How a classic Plan-Do-Check-Act cycle using XP disciplines closes the accountability gap in AI-assisted development.

Evidence, framework, live demo, and discussion. 90 minutes.

01

The Problem

The tools are working. The outcomes are not.

AI Coding Is Now Standard Practice

90%

use AI at work

DevOps Research and Assessment 2025

~5,000 technology professionals

Conducted by Google Cloud

80%+

report productivity gains

DORA 2025

61%

never use agent mode

DORA 2025

Chat and predictive text completion are where developers actually spend their time with AI.

Individual Gains Do Not Equal Team Delivery

Individual Gains

80%+ report productivity gains

59% report code quality improvements

70% report at least some confidence in generated code quality

DORA 2025

Team Delivery

2024: Every 25% increase in AI adoption is associated with an estimated 7.2% reduction in delivery stability (how often deployments cause failures or outages, or require rollbacks).

2024: an estimated 1.5% decrease in delivery throughput. 2025: a small net increase, with high uncertainty.

DORA 2024/2025

"The value of AI is not going to be unlocked by the technology itself, but by reimagining the system of work it inhabits."

— DORA 2025

AI Adoption Is Degrading Code Quality

10x

duplicated code blocks 2022–2024

GitClear 2025

211M lines of code

18%

of inconsistent clone changes contain faults, varying by system type

Juergens et al.

18.42%

of buggy code clones propagate to copies

Mondal et al.

"Growing Evidence that AI-Generated Code Optimizes for the Short-Term." — GitClear

02

Why Code Review Fails

The accountability gap and what research shows code review is actually good at.

Code Review Was Never Good at Finding Bugs

What We Expect Code Review to Do

Find defects

Catch correctness and security issues

What Review Actually Delivers

Understanding the change

Code improvements and readability

Team norms and knowledge transfer

Bacchelli & Bird, ICSE 2013 · Sadowski et al., ICSE-SEIP 2018

Finding defects was the top motivation for 44% of developers, yet only 14% of review comments address defects, and most of those are small, low-level logic issues.

Bacchelli & Bird, ICSE 2013

AI Makes This Worse at Every Level

Speed:

Controlled studies show developers complete tasks up to 55.8% faster with AI assistance. (Peng et al. 2023)

Size:

AI-generated code arrives in larger batches, and duplicated code blocks increased 10x between 2022 and 2024. (GitClear 2025)

Confidence:

39% of developers report little or no trust in AI-generated code quality. (DORA 2024)

03

PDCA

Applying XP disciplines in Plan-Do-Check-Act at the human-AI interaction level

Structured Prompting Works

61%

Defect reduction from applying a Plan-Do-Check-Act cycle to software development (single case study)

Ning et al., 2010

1–74%

Improved performance of structured prompting vs. ad-hoc, depending on technique and task complexity

Sahoo et al., 2024

"The recommended workflow has four phases. Explore, Plan, Implement, Commit."

— Best Practices for Claude Code (Anthropic)

The PDCA Cycle Applied to AI Coding

P

PLAN

Analyze codebase. Identify existing patterns and constraints (Breadth-wise).

Plan the implementation before writing any code (Depth-wise).

Developer reviews and approves before proceeding.

D

DO

Test-driven implementation in small, atomic increments. The testing prompt includes context on test anti-patterns.

Enforces "called shots", red-green discipline, architectural safety, and git history preservation.

C

CHECK

Completion analysis: agent reviews session transcript and generated code against original plan. Explicit definition of done beyond functional testing.

Includes a check for refactoring opportunities.

A

ACT

Micro-retrospective. Agent analyzes what worked and suggests targeted refinements to prompts and interaction patterns for the next cycle.
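The four phases above can be sketched as a small control loop. This is an illustrative outline only, not the author's published skill or prompts: `Phase`, `CycleLog`, `run_cycle`, and the `approve` callback are hypothetical names, and the "agent" work is reduced to log entries so that the human approval gate is the only real behavior.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List


class Phase(Enum):
    PLAN = "plan"
    DO = "do"
    CHECK = "check"
    ACT = "act"


@dataclass
class CycleLog:
    """Record of one PDCA pass (hypothetical structure for illustration)."""
    entries: List[str] = field(default_factory=list)
    completed: bool = False


def run_cycle(plan: str, approve: Callable[[str], bool], steps: List[str]) -> CycleLog:
    """Walk one Plan-Do-Check-Act pass with a developer approval gate after Plan."""
    log = CycleLog()
    log.entries.append(f"{Phase.PLAN.value}: {plan}")

    # The developer reviews and approves the plan before any code is written.
    if not approve(plan):
        log.entries.append("plan rejected by developer; stopping before Do")
        return log

    # Do: one small increment at a time, failing test first, then passing code.
    for step in steps:
        log.entries.append(f"{Phase.DO.value}: red then green for '{step}'")

    # Check: compare what was built against the approved plan.
    log.entries.append(f"{Phase.CHECK.value}: reviewed {len(steps)} increments against the plan")

    # Act: micro-retrospective feeding the next cycle.
    log.entries.append(f"{Phase.ACT.value}: noted prompt refinements for the next cycle")
    log.completed = True
    return log
```

Calling `run_cycle("add retry to client", lambda p: False, ["retry once"])` stops after the Plan entry, which is the point of the gate: nothing proceeds to Do without explicit human sign-off.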

Working Agreements: Human Accountability in Writing

Commitments made by the developer or collectively by a team

Guidelines for when to intervene

Asserts accountability for code the developer did not write

Refined through retrospection

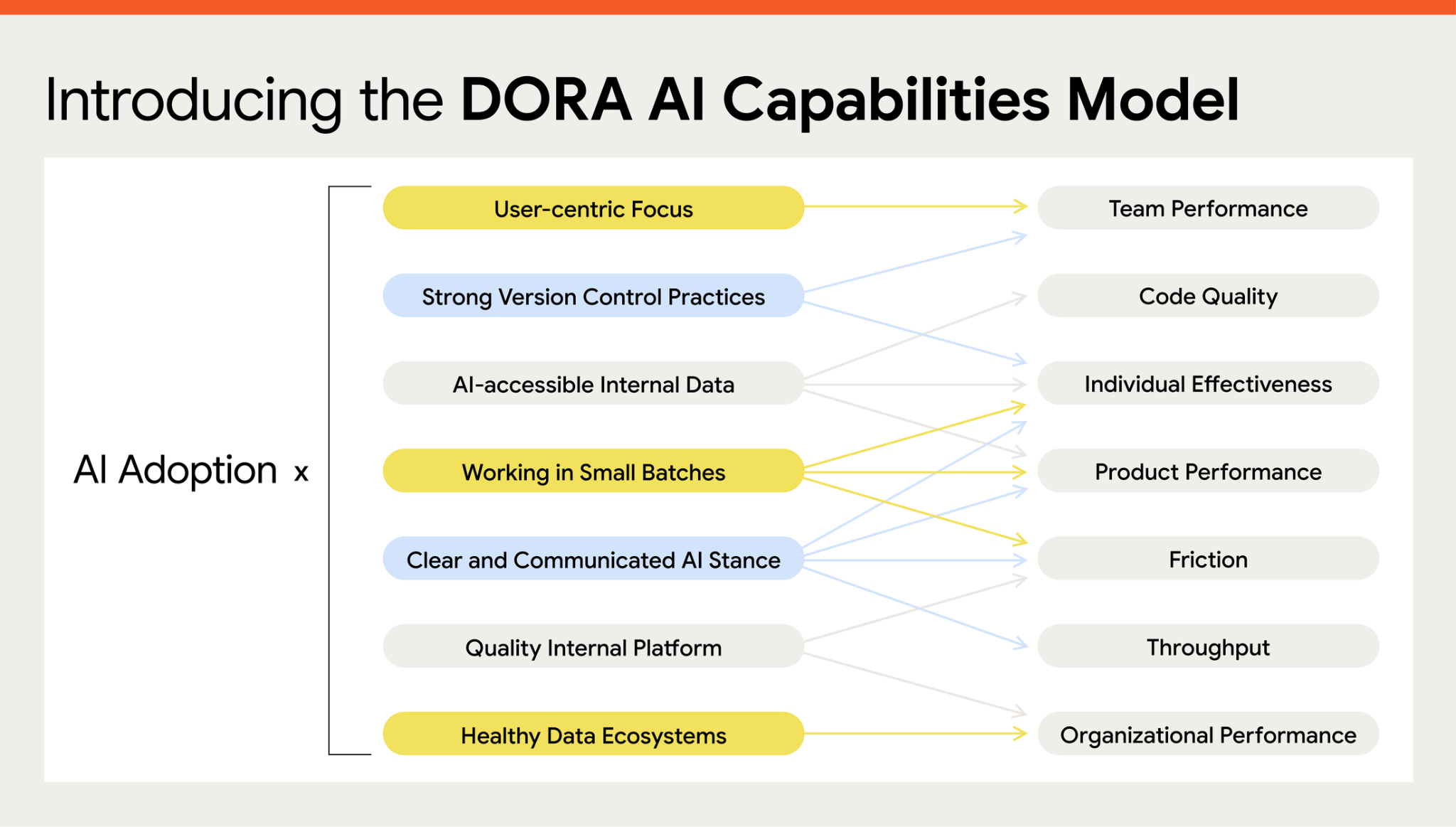

Introducing the DORA AI Capabilities Model

https://cloud.google.com/blog/products/ai-machine-learning/introducing-doras-inaugural-ai-capabilities-model

PDCA Mapped to the DORA AI Capabilities Model

Plan

Do

User-centric Focus → Team Performance

Strong Version Control Practices → Code Quality

AI-accessible Internal Data → Individual Effectiveness

Working Agreements

Working in Small Batches → Product Performance

Clear and Communicated AI Stance → Friction

Quality Internal Platform → Throughput

Check

Act

Healthy Data Ecosystems → Organizational Performance

PDCA as a Claude Skill/Prompts

The framework's Claude skill/prompt templates and supporting tools are publicly available.

PDCA Framework Prompts

A disciplined framework for AI-assisted code generation

github.com/kenjudy/pdca-code-generation-process

Code Quality Metrics

Script and GitHub Actions for measuring AI Code Drift

github.com/stride-nyc/code-quality-metrics

Steve Yegge's Beads

A memory upgrade for your coding agent

https://github.com/gastownhall/beads

04

Live Demo

Using Claude Code with the author's PDCA skill

Running the demo

You can run the demo yourself.

Demo Instructions

Set up the prerequisites and step through the demo

github.com/kenjudy/pdca-code-generation-process/blob/main/presentations/XP%202026/pdca-demo-instructions.md

Presentation Video, Demo Video (TBD)

I will record the full presentation, including the demo, and a separate recording of the demo on its own.

05

Measurement

Detect drift before the debt compounds.

Measuring AI Adoption Alone Is Dangerous

What most teams measure

Lines of AI-generated code accepted

Pull requests merged per developer

Velocity increase

Tasks completed

What goes unmeasured

Commit size and test discipline trends

Code duplication and churn rates

Delivery stability

Actual value delivered

"When a measure becomes a target, it ceases to be a good measure." (Goodhart's Law)
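As a rough illustration of how the unmeasured duplication signal could be surfaced, the sketch below flags runs of identical consecutive lines that appear in more than one place. It assumes a "duplicated block" is `window` consecutive non-blank lines that match after stripping whitespace; that definition, the default of 5, and the function name `duplicated_blocks` are assumptions for this example and may differ from how GitClear or any AST-based tool counts clones.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Block = Tuple[str, ...]
Location = Tuple[str, int]  # (file path, 1-based line where the block starts)


def duplicated_blocks(files: Dict[str, str], window: int = 5) -> Dict[Block, List[Location]]:
    """Return every block of `window` non-blank lines that occurs more than once."""
    seen: Dict[Block, List[Location]] = defaultdict(list)
    for path, text in files.items():
        # Keep (line number, stripped text) pairs for non-blank lines only.
        lines = [
            (number, raw.strip())
            for number, raw in enumerate(text.splitlines(), start=1)
            if raw.strip()
        ]
        # Slide a fixed-size window over the file and index each block by its content.
        for start in range(len(lines) - window + 1):
            block = tuple(content for _, content in lines[start:start + window])
            seen[block].append((path, lines[start][0]))
    return {block: places for block, places in seen.items() if len(places) > 1}
```

Tracking the number of returned blocks per week, rather than a single snapshot, is what would show a trend; a textual match like this will miss clones with renamed variables.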

The Same Rate of AI Adoption Can Have Different Consequences

Foundational Challenges

low throughput, instability, burnout, and weak product performance

High adoption = accelerating debt.

Output metrics rise while delivery stability falls. AI makes the gap between what teams produce and what they can sustain wider, faster.

High Impact, Low Cadence

Strong outcomes and individual effectiveness, but low delivery frequency and high instability

High adoption = growing invisible risk.

Product metrics look healthy. Delivery instability is building underneath. Adoption metrics will never show it.

Harmonious High-Achievers

High throughput and stability together, low burnout.

High adoption = compounding gains.

Adoption and delivery metrics move together because strong practices are in place to connect them.

DORA Seven Team Archetypes

Measuring Code, Platform, and Organizational Health

Measurable from GitHub commit history

Commit size and sprawl

Test-first discipline rate

Velocity trend

Commit message quality

Requires additional tooling or surveys

Delivery stability and throughput (CI/CD data: DX, LinearB)

Code duplication and cloning (AST analysis: GitClear)

AI policy clarity and platform quality (internal audit)

Developer well-being and burnout (team survey)

DORA Seven Organizational Capabilities
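Two of the commit-history signals above, commit size and test discipline, can be approximated from plain `git log` output. The sketch below is not the script in the linked metrics repository; it assumes the log was produced with `git log --numstat --format=@%h`, treats any path containing "test" as a test file, and uses "share of commits that touch a test file" as a coarse proxy for test-first discipline. The names `Commit`, `parse_numstat`, `summarize`, and the 100-line threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Commit:
    sha: str
    lines_changed: int = 0
    files_changed: int = 0
    touches_tests: bool = False


def parse_numstat(log_text: str) -> List[Commit]:
    """Parse the text of `git log --numstat --format=@%h` into Commit records."""
    commits: List[Commit] = []
    for line in log_text.splitlines():
        if line.startswith("@"):
            # A new commit header, e.g. "@a1b2c3d".
            commits.append(Commit(sha=line[1:]))
            continue
        parts = line.split("\t")
        if len(parts) != 3 or not commits:
            continue  # blank separator lines and anything unexpected
        added, deleted, path = parts
        current = commits[-1]
        current.files_changed += 1
        # Binary files report "-" for both counts; treat them as zero lines.
        current.lines_changed += sum(int(n) for n in (added, deleted) if n.isdigit())
        if "test" in path.lower():
            current.touches_tests = True
    return commits


def summarize(commits: List[Commit], large_threshold: int = 100) -> Dict[str, float]:
    """Aggregate size and test-touch rates; the threshold is an assumed cut-off."""
    if not commits:
        return {"avg_lines_per_commit": 0.0, "large_commit_rate": 0.0, "test_touch_rate": 0.0}
    total = len(commits)
    return {
        "avg_lines_per_commit": sum(c.lines_changed for c in commits) / total,
        "large_commit_rate": sum(c.lines_changed > large_threshold for c in commits) / total,
        "test_touch_rate": sum(c.touches_tests for c in commits) / total,
    }
```

Keeping the parser separate from the `git` call means it can be run against saved log text, for example to compare the same metrics before and after a team adopts AI tooling.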

06

Discussion

Your team. Your archetype. Your intervention priority.

What would it take for you to commit to attempting a structured practice before your next AI coding session?

Human-centric agile disciplines are not constraints on AI capability. They are a mechanism by which AI capability is converted into delivery value.

Resources

The PDCA skill and measurement tools are publicly available under CC 4.

PDCA Framework Prompts

github.com/kenjudy/pdca-code-generation-process

GitHub Actions / AI Drift Measurement Tool

github.com/stride-nyc/code-quality-metrics

InfoQ Article

infoq.com/articles/PDCA-AI-code-generation

Agile Alliance Blog Post

agilealliance.org/reducing-ai-code-debt

Contact Me

Please reach out to learn more or to share your own approaches to agentic coding.

LinkedIn

www.linkedin.com/in/kenjudy/

GitHub

github.com/kenjudy

Personal Website

kenjudy.us

Employer Website

stride.build

I used Claude for brainstorming, argument review, and drafting assistance across multiple revisions. I personally verified all sources and made all final content decisions. I take full responsibility for the accuracy, originality, and quality of this work. [References]
